Generated Reality

Interactive real-time world simulation in VR. Read more about it in my publications.

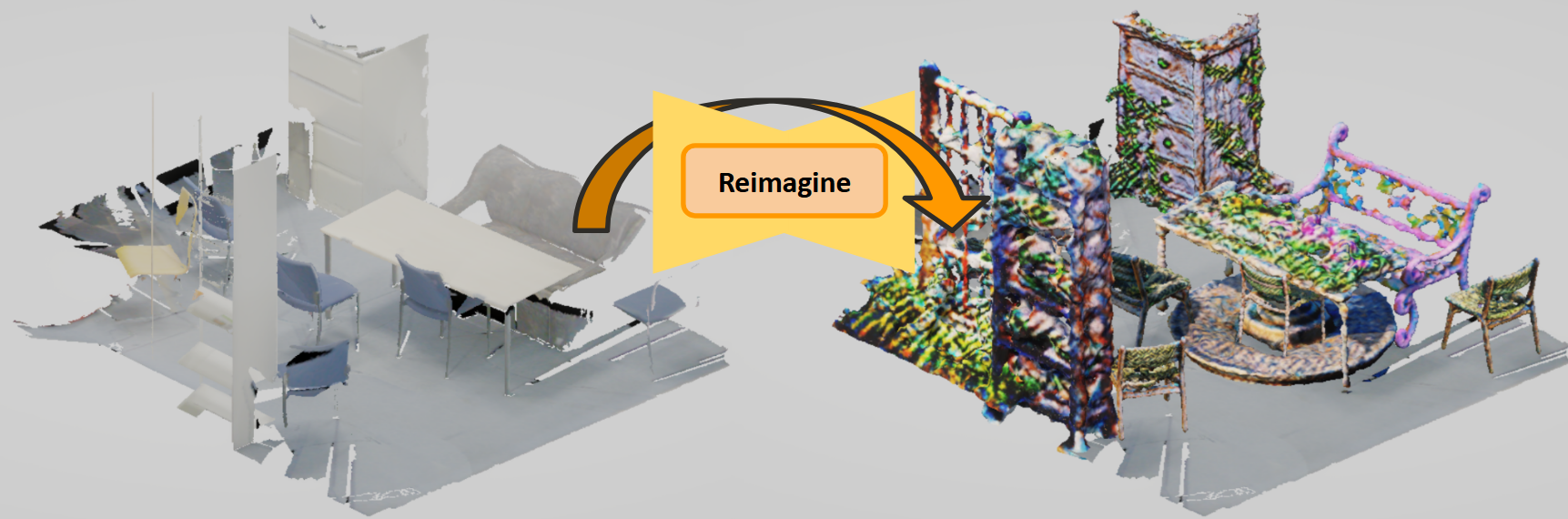

Reimagine 3D scenes using part-level semantic segmentation and locally-controlled 3D asset generation. Collaboration with Stanford Gradient Spaces Lab.

Undergraduate Honors Thesis

Multi-modal SLAM algorithm using 3D Gaussian Splatting. Read more about it in my publications.

Undergraduate Senior Design Project

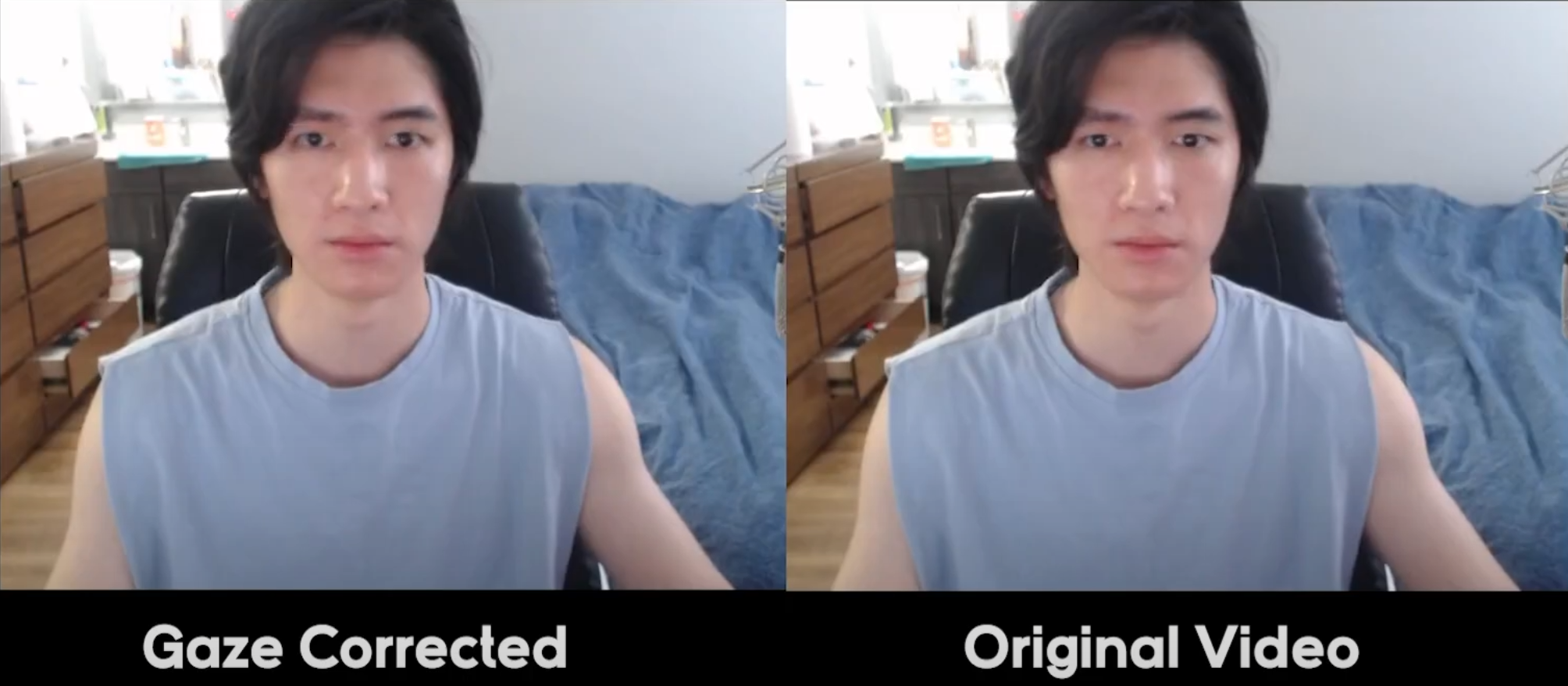

AI model for redirecting eye gaze in real-time.

Recreation of Minecraft with full rigid-body physics and working portals. Computer graphics final project.

Training pipeline to stylize 4D NeRF models with an input style image. Computer vision final project.

Fall 2021 EE445L Competition Winner

AR headset built from scratch for under $80.

ZMK-powered BLE split keyboard with custom-designed PCB and chic 3D-printed case. Successor to my previous project, the Hana Gamepad.

TAMUhack 2021 Best Hardware Hack Winner

Hands-free water faucet using stepper motors and laser TOF sensors.

Published in 2023 IEEE/ION Position, Location and Navigation Symposium (PLANS), 2023

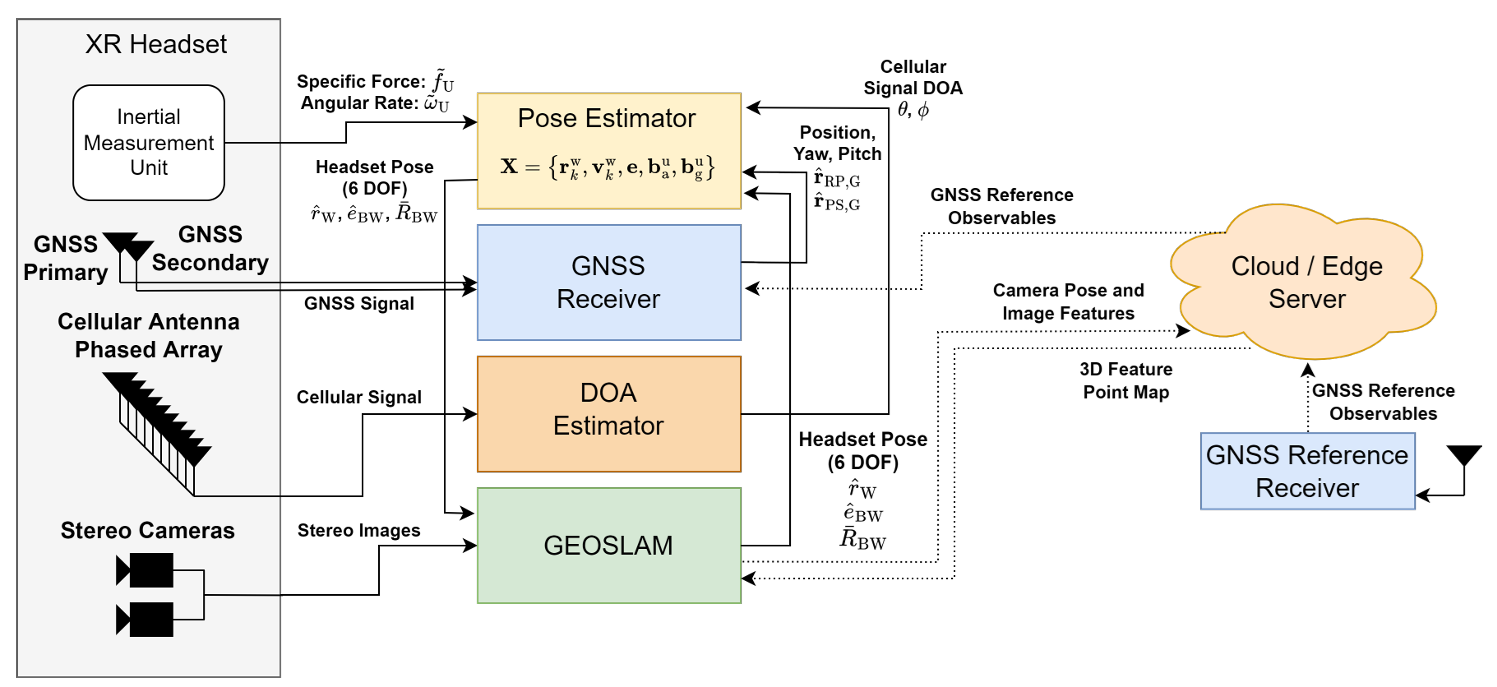

This paper presents a novel headset tracking framework designed for extended reality (XR) applications. By loosely coupling a visual simultaneous localization and mapping (SLAM) algorithm to a tightly-coupled carrier phase differential GNSS (CDGNSS) and inertial sensor subsystem, the proposed system aims to achieve centimeter-accurate, globally-referenced tracking that persists during extended periods of GNSS degradation. Collaborative and persistent XR experiences are enabled through accurate map creation utilizing a bundle adjustment approach for map generation and maintenance. Cloud or near-edge offloading of computationally demanding steps in the pipeline is explored to reduce the computational demand on the headset. This paper also explores the benefit of additional headset tracking constraints offered by direction-of-arrival measurements to nearby cellular base stations.

Published in 2024 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2024

Our method, MM3DGS, addresses the limitations of prior neural radiance field-based representations by enabling faster rendering, scale awareness, and improved trajectory tracking. Our framework enables keyframe-based mapping and tracking utilizing loss functions that incorporate relative pose transformations from pre-integrated inertial measurements, depth estimates, and measures of photometric rendering quality. Experimental evaluation on several scenes shows a 3x improvement in tracking and 5% improvement in photometric rendering quality compared to the current 3DGS SLAM state-of-the-art, while allowing real-time rendering.

Published in arXiv, 2026

We introduce a human-centric video world model that is conditioned on both tracked head pose and joint-level hand poses. For this purpose, we evaluate existing diffusion model conditioning strategies and propose an effective mechanism for 3D head and hand control, enabling dexterous hand-object interactions. We train a bidirectional video diffusion model teacher using this strategy and distill it into a causal, interactive system that generates egocentric virtual environments. We evaluate this generated reality system with human subjects and demonstrate improved task performance as well as a significantly higher perceived level of control over the performed actions compared with relevant baselines.
